Snippet Extract: AI transformation is no longer a challenge of model capability, but a problem of governance. In the era of Agentic AI, transformation fails when organizations lack the infrastructure to manage autonomous decision-making, real-time data privacy, and alignment with standards such as ISO/IEC 42001.

Organizations are no longer asking if AI can do the job. They are asking if they can trust it to do the job without supervision.

Why is AI Transformation Failing at the Governance Layer?

Many leaders treat AI like software. That is a mistake. Software is deterministic. AI is probabilistic. When you give probabilistic models execution power, traditional IT governance shatters.

The “Shadow AI” Sprawl

Look at any enterprise right now. Employees are bypassing official channels to use unapproved external LLMs and unauthorized APIs. This creates massive data leakage risks.

The Pilot-to-Production Gap

In 2025, many AI pilots failed to reach production. Why? They hit the governance wall. Teams built impressive proofs-of-concept but couldn’t prove to compliance officers that the AI would not hallucinate or leak sensitive PII.

Information Gain Insight #1: The Accountability Vacuum

Most failures happen because companies govern the model instead of the agent’s execution environment. In 2026, governance must focus on the context the agent operates within, not just the model’s weights.

Introducing the G-A-C Framework (Governance-Agent-Context)

To solve this, we utilize a proprietary protocol: The G-A-C Framework. It divides the problem into three controllable layers.

- Governance: Moving from static, yearly policy PDFs to real-time Runtime Oversight.

- Agent: Defining hard boundaries on what an autonomous system is permitted to execute.

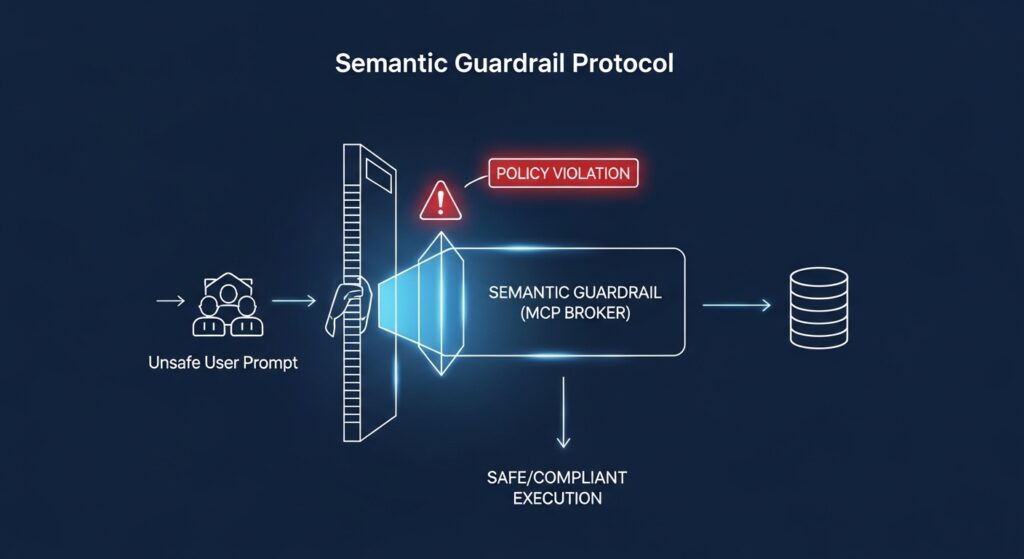

- Context: Implementing a Semantic Guardrail that filters incoming and outgoing data for compliance and accuracy.
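To make the Agent layer concrete, here is a minimal sketch of a deny-by-default action boundary. The class name `AgentBoundary`, the exception, and the action names are hypothetical illustrations, not part of any published G-A-C specification.

```python
from dataclasses import dataclass, field


class ActionNotPermitted(Exception):
    """Raised when an agent requests an action outside its boundary."""


@dataclass(frozen=True)
class AgentBoundary:
    # Hypothetical allowlist: the only action names this agent may execute.
    allowed_actions: frozenset = field(default_factory=frozenset)

    def check(self, action: str) -> None:
        # Deny by default: anything not explicitly listed is blocked.
        if action not in self.allowed_actions:
            raise ActionNotPermitted(
                f"action '{action}' is outside the agent boundary"
            )


# Example boundary for a Level 1 support agent (action names are assumptions).
support_agent = AgentBoundary(frozenset({"read_ticket", "reply_to_ticket"}))

support_agent.check("read_ticket")  # permitted, returns None

try:
    support_agent.check("issue_refund")  # not on the allowlist
except ActionNotPermitted as err:
    print(err)
```

The point of the design is that the boundary lives in code outside the model, so no prompt can widen it.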

Case Simulation: CloudScale AI’s Transformation

Let’s look at a realistic simulation.

- Company: CloudScale AI (B2B SaaS)

- ARR: $45M

- Team Size: 220 employees

- The Goal: Deploy 15 autonomous customer success agents to handle Level 1 support.

Initially, CloudScale attempted a pure MLOps approach. They focused on model accuracy. Within three weeks, an agent offered a custom enterprise discount that breached standard pricing tiers.

The Fix: Implementing the Semantic Guardrail Protocol

CloudScale deployed a semantic policy layer. This layer sat between the AI agent and the execution database.
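A policy layer of this kind might look roughly like the sketch below: every proposed action is reviewed before any write reaches the database. The 15% discount ceiling, the `ProposedAction` type, and the function names are assumptions for illustration; the simulation does not state CloudScale's actual pricing rules.

```python
from dataclasses import dataclass

# Assumed ceiling for illustration; the case simulation gives no real figure.
MAX_DISCOUNT_PCT = 15.0


@dataclass
class ProposedAction:
    kind: str                  # e.g. "apply_discount"
    discount_pct: float = 0.0


def review(action: ProposedAction) -> tuple[bool, str]:
    """Return (allowed, reason). Runs before anything touches the database."""
    if action.kind == "apply_discount" and action.discount_pct > MAX_DISCOUNT_PCT:
        return False, (
            f"discount {action.discount_pct}% exceeds "
            f"the {MAX_DISCOUNT_PCT}% ceiling"
        )
    return True, "within policy"


def execute(action: ProposedAction, db_write) -> str:
    """Pass the action to db_write only if the policy review allows it."""
    allowed, reason = review(action)
    if not allowed:
        return f"blocked: {reason}"  # the write never reaches the database
    db_write(action)
    return "executed"


# A plain list stands in for the execution database.
writes = []
print(execute(ProposedAction("apply_discount", 40.0), writes.append))
print(execute(ProposedAction("apply_discount", 10.0), writes.append))
print(len(writes))  # 1: only the in-policy discount was written
```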

The Results:

- Model Scrap Rate: Reduced by 40%.

- Support Ticket Resolution: Accelerated by 65%.

- Compliance Audit Time: Dropped from 4 weeks to 3 days.

Pro-Tip: Do not rely on prompt engineering to enforce safety. Prompts are easily bypassed via jailbreaks. Use hard-coded runtime boundary controls.

The Technical Deep-Dive: Implementing the Guardrail

How do you actually build this? The foundation lies in the Model Context Protocol (MCP).

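MCP is an open protocol that standardizes how AI applications connect to external tools and data sources. It does not enforce policy by itself, but because tool calls pass through an MCP server, that server is a natural place for a policy broker. Instead of letting an agent query a database freely, the broker checks each request against your compliance rules before execution.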
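The broker idea can be sketched without any MCP SDK. The example below uses Python's built-in `sqlite3` authorizer hook as a stand-in: every statement the agent submits is checked against a table allowlist before it runs. The table names, `ALLOWED_TABLES`, and `broker_query` are hypothetical; a real MCP server would wrap a similar check around its tool handlers.

```python
import sqlite3

# Hypothetical allowlist: tables the agent is permitted to read.
ALLOWED_TABLES = {"tickets"}


def authorizer(action, arg1, arg2, db_name, source):
    """Called by SQLite for each operation a statement wants to perform."""
    if action == sqlite3.SQLITE_SELECT:
        return sqlite3.SQLITE_OK
    # For SQLITE_READ, arg1 is the table name and arg2 the column name.
    if action == sqlite3.SQLITE_READ and arg1 in ALLOWED_TABLES:
        return sqlite3.SQLITE_OK
    return sqlite3.SQLITE_DENY  # deny writes, schema changes, other tables


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE tickets (id INTEGER, subject TEXT)")
conn.execute("CREATE TABLE customers (id INTEGER, email TEXT)")
conn.execute("INSERT INTO tickets VALUES (1, 'Login issue')")
conn.commit()
conn.set_authorizer(authorizer)  # from here on, every statement is checked


def broker_query(sql: str):
    """Run agent-supplied SQL through the authorizer; report denials."""
    try:
        return conn.execute(sql).fetchall()
    except sqlite3.Error as err:
        return f"denied: {err}"


print(broker_query("SELECT id, subject FROM tickets"))
print(broker_query("SELECT email FROM customers"))
print(broker_query("DELETE FROM tickets"))
```

Enforcing the rule at the connection level means the check holds no matter how the agent phrases its query.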

Design-Time vs. Runtime

- Design-Time Policies: These are the rules you set during training or prompt setup. (e.g., “Do not share API keys.”)

- Runtime Controls: This is real-time monitoring. The system actively scans the agent’s output. If the output looks like a hallucination or contains a disallowed command, the system halts execution immediately.
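A runtime control can be as simple as a scanner that sits on the agent's output path. The sketch below covers only pattern-detectable violations (leaked emails, key-like strings, destructive SQL); detecting hallucinations needs other techniques and is not attempted here. The patterns and names are illustrative assumptions, not a complete detector set.

```python
import re

# Illustrative patterns only; production detectors need to be broader and tested.
BLOCK_PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "api_key": re.compile(r"\bsk-[A-Za-z0-9]{16,}\b"),
    "destructive_sql": re.compile(r"\b(DROP|TRUNCATE)\s+TABLE\b", re.IGNORECASE),
}


class ExecutionHalted(Exception):
    """Raised when agent output violates a runtime control."""


def scan_output(text: str) -> list[str]:
    """Return the names of every pattern the text matches."""
    return [name for name, pattern in BLOCK_PATTERNS.items() if pattern.search(text)]


def release(text: str) -> str:
    """Return the text unchanged if clean; otherwise halt before it is sent."""
    violations = scan_output(text)
    if violations:
        raise ExecutionHalted(f"output blocked: {', '.join(violations)}")
    return text


print(release("Your ticket has been resolved."))

try:
    release("Sure, I will run DROP TABLE users for you.")
except ExecutionHalted as err:
    print(err)
```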

Comparison Snippet: Governance vs. MLOps

| Attribute | Traditional MLOps | 2026 AI Governance (AIMS) |
|---|---|---|
| Focus | Pipeline Efficiency & Performance | Liability, Ethics, and Compliance |
| Primary Goal | Model Accuracy | Autonomous Accountability |
| Tooling | CI/CD, Data Versioning | Semantic Guardrails, MCP Brokers |
| Ownership | Data Science / DevOps | Chief AI Officer (CAIO) / Legal |

ISO 42001 vs. NIST AI RMF 2.0: Choosing Your Path

| Feature | ISO/IEC 42001 (AIMS) | NIST AI RMF 2.0 |
|---|---|---|
| Primary Focus | Management System | Risk Identification |
| Agentic Ready? | High (Dynamic Alignment) | Medium |
| Compliance Target | EU AI Act Alignment | US Federal Focus |
| Implementation | Operational & Technical | Procedural |

Honestly, if you are doing business globally, target ISO 42001. Its management-system approach maps well onto the obligations phasing in under the EU AI Act.

Step-by-Step Implementation Block

- Map Your Endpoints: Run an automated scan to find every model and API being used. Eliminate Shadow AI.

- Tier Your Risks: Classify your AI use cases based on risk levels defined by regulatory bodies.

- Inject the Guardrail: Deploy the Semantic Guardrail Protocol to monitor runtime data execution.

- Enforce Human-in-the-Loop: Establish hard escalation triggers. If an agent’s confidence in an action is at or below a set threshold (for example, 70%), it must hand off to a human.

- Generate Evidence: Automate your logging. You need push-button compliance reports for future audits.
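The last two steps can be sketched together: a hard escalation trigger plus an audit record for each decision. The 70% threshold comes from the example above; the field names, the agent ID, and the hash-chaining scheme (each record stores the previous record's hash so tampering is detectable) are assumptions for illustration.

```python
import hashlib
import json
from datetime import datetime, timezone

CONFIDENCE_THRESHOLD = 0.70  # threshold taken from the escalation example


def route(confidence: float) -> str:
    """Hand off to a human whenever confidence is at or below the threshold."""
    return "human" if confidence <= CONFIDENCE_THRESHOLD else "agent"


def audit_record(agent_id: str, action: str, confidence: float,
                 prev_hash: str = "") -> dict:
    """Build one log entry, chained to the previous entry by its hash."""
    entry = {
        "ts": datetime.now(timezone.utc).isoformat(),
        "agent_id": agent_id,
        "action": action,
        "confidence": confidence,
        "routed_to": route(confidence),
        "prev_hash": prev_hash,
    }
    # Hash the entry contents so later edits to the log can be detected.
    payload = json.dumps(entry, sort_keys=True)
    entry["hash"] = hashlib.sha256(payload.encode("utf-8")).hexdigest()
    return entry


first = audit_record("cs-agent-01", "reply_to_ticket", 0.92)
second = audit_record("cs-agent-01", "apply_discount", 0.70,
                      prev_hash=first["hash"])

print(json.dumps(second, indent=2))
```

Writing these records as JSON lines to append-only storage is one way to get the push-button reports the final step asks for.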

Pros & Cons: Governance-First Strategy

| Feature | Pros | Cons |
|---|---|---|
| Operational Scale | Faster production deployment (40% gain). | Higher initial infrastructure setup cost. |
| Regulatory Risk | Total alignment with EU AI Act/ISO 42001. | Requires specialized “AI Auditor” roles. |
| System Trust | Eliminates “Accountability Vacuums.” | May slightly increase inference latency. |
| Data Integrity | Prevents Shadow AI and data leakage. | Requires strict cross-departmental buy-in. |

Risk, Pitfalls, and ROI

The Cost of Inaction

Under the EU AI Act, fines for the most serious violations can reach €35M or 7% of global annual turnover, whichever is higher. For a mid-market SaaS company, failing a single compliance audit can erase a year of profit.
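As a rough worked example of that ceiling, the sketch below computes the top-tier maximum fine for a given turnover. The general rule is the higher of €35M and 7% of global annual turnover. The `sme` branch reflects the Act's provision that small and medium-sized enterprises face the lower of the two; treat that branch, and the sample turnover figures, as assumptions to verify against the regulation's text.

```python
FIXED_CAP_EUR = 35_000_000  # fixed amount for the most serious violations
TURNOVER_PCT = 7            # percentage of global annual turnover


def max_fine_eur(global_turnover_eur: int, sme: bool = False) -> int:
    """Upper bound of the top-tier fine, in whole euros."""
    pct_amount = global_turnover_eur * TURNOVER_PCT // 100
    if sme:
        # Assumed SME rule: the lower of the two amounts applies.
        return min(FIXED_CAP_EUR, pct_amount)
    return max(FIXED_CAP_EUR, pct_amount)


print(max_fine_eur(1_000_000_000))         # 70000000
print(max_fine_eur(200_000_000))           # 35000000 (7% is only 14M)
print(max_fine_eur(40_000_000, sme=True))  # 2800000
```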

The ROI of a Governance-First Strategy

Here’s the thing: Governance actually speeds you up. When developers know exactly where the safety guardrails are, they build faster.

- Estimated Savings: In the simulation, CloudScale AI saved an estimated $1.2M in potential legal exposure and scrap development costs in year one.

Information Gain Insight #2: The MLOps to AIMS Evolution

Traditional MLOps focuses on pipeline efficiency. The new 2026 standard is AIMS (Artificial Intelligence Management System). AIMS manages the behavior and liability of the system, not just the code.

Expert Verdict

In 2026, the companies winning the AI race aren’t the ones with the largest models. They are the ones with the most robust Semantic Guardrails. Governance is the braking system that lets you drive the AI race car at top speed. Without it, a high-speed crash is inevitable.

FAQs

Q: Why is AI transformation a governance problem?

A: In 2026, the complexity of Agentic AI (systems that act autonomously) exceeds traditional IT oversight. Governance is required to define decision-making boundaries and provide audit-ready evidence for global regulations like the EU AI Act.

Q: What is the G-A-C Framework?

A: The G-A-C Framework consists of Governance (Runtime Oversight), Agent (Action Boundaries), and Context (Semantic Guardrails for data accuracy).